Meta-Analysis: Less Boring Than It Sounds?

“Meta-analysis” describes a statistical method that collates data from a number of sources and gives an answer more accurate than any single source could alone. It doesn’t sound all that interesting, but it can give a pretty neat insight into virtually anything, as we shall see…

Recently I was put on to a web site called Chart Porn by someone with genius tastes. As I’ve mentioned here before, I am a fan of graphs. In fact, I don’t believe it is possible not to like graphs, unless you are an actual moron.

How can you not relish the wonder that is thousands of data points described in a colourful icon that you can understand without hardly moving your eyes or brain?

I like stats, I’m certainly no expert, I don’t know all the proper words and I certainly couldn’t work a stats software package. But, to me, there is something mind expanding about trends, flows and averages. Call me “Mr Sad Man” if you like, or even “Little Miss Boring” – I do not care. The truth hurts but I am bigger than that now.

Stats can be misused though. And that makes everyone, including Buddha and Moses, cry. I did it myself once by mistake. I used to work for a large utilities company, doing basic stats about the reasons why people called in to their customer service lines and what happened when they did.

Riveting stuff, but it gave me a chance to fiddle around with spreadsheets and graphs; and that, I reveled in. One day I was fiddling about in a report that showed whether complaints were sorted out on the first phone call or whether they dragged on and generated further phone calls.

I was bored, as you might imagine, and decided to painstakingly go through all of the data and put in a Male/Female column. That way I could find out whether boys were better than girls at handling complaints. Like I said, I had time on my hands.

Once I’d finished all the jiggery pokery, the results showed that blokes handled complaints on the first call about ten times as often as girls; the ladies, on average, took a few calls to sort things out. I found this amusing, so I e-mailed it to my boss (male) just for ships and wiggles. He found it very interesting and immediately e-mailed it to his superior (female). It leaped from their inbox to the head of department’s in-tray within 24 hours.

Suddenly, I was being discussed at a high level. It was only a week from that point until it reached Director level and was flaunted as a fascinating bit of work from our department. By now I was very uncomfortable; I had made the graph with little regard for checking, let alone double checking.

So, I looked back on the data I had used and it turns out I should have checked it before I sent it. There was one idiotic guy in the study who claimed to have sorted out the complaint on the call every single time. Whereas, everyone else had told the truth.

Once you removed him from the data, there was absolutely no difference between males and females. Whoops. But thankfully, no one ever came and asked for my workings.
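For the spreadsheet-curious, here is a rough sketch of how one over-enthusiastic chap can bend a whole result. Every name and number below is invented for illustration (I never kept the real data, remember), and the "flag anyone claiming near enough 100%" rule is just my assumption about what a sensible check might look like.

```python
# Hypothetical data: (agent, gender, complaints handled, resolved on first call).
# None of these numbers come from the real report; they only show the effect.
records = [
    ("agent_a", "M", 100, 100),  # the one who claims a perfect record
    ("agent_b", "M", 100, 10),
    ("agent_c", "M", 100, 10),
    ("agent_d", "F", 100, 10),
    ("agent_e", "F", 100, 10),
    ("agent_f", "F", 100, 10),
]


def first_call_rate(rows, gender):
    """Share of complaints resolved on the first call for one gender."""
    handled = sum(r[2] for r in rows if r[1] == gender)
    resolved = sum(r[3] for r in rows if r[1] == gender)
    return resolved / handled


def suspicious_agents(rows, threshold=0.99):
    """Agents whose own first-call rate is implausibly close to 100%."""
    return [r[0] for r in rows if r[3] / r[2] >= threshold]


# Before checking: the boys look much better than the girls.
male_rate = first_call_rate(records, "M")
female_rate = first_call_rate(records, "F")

# After checking: drop anyone flagged and work it out again.
flagged = suspicious_agents(records)
cleaned = [r for r in records if r[0] not in flagged]
male_rate_cleaned = first_call_rate(cleaned, "M")
female_rate_cleaned = first_call_rate(cleaned, "F")

print(f"Before: M={male_rate:.2f}, F={female_rate:.2f}")
print(f"Flagged: {flagged}")
print(f"After:  M={male_rate_cleaned:.2f}, F={female_rate_cleaned:.2f}")
```

With these made-up figures the gap between the two groups vanishes the moment the flagged agent is taken out, which is the same shape as my little disaster.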

There are probably Directors swilling Brandy at Christmas earnestly discussing that erroneous result to this very day. And that is just my humble experience of bad stats and the effect they can have.

The billy big balls in this business sphere do not have the time or inclination to check things. Other people should do that. But unfortunately, it’s hungover chimps like me that are making these basic errors and causing misinformation on a hideously global scale. Double whoops.

Always double check, people. As Vic Reeves once said:

“87.3% of stats are made up on the spot.”

I’m sure that’s accurate, but remember the other 12.7% weren’t made up on the spot, they were worked out incorrectly by an “expert.” So where does that leave us?:

META-ANALYSIS:

In a nutshell, a meta-analysis is where you get a whole bunch of experiments that test the same thing and bang all the results into one big graph train. So you get more accurate results because the dip sticks are diluted.
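If you want to see the dilution in action, here is a minimal sketch. It assumes the simplest possible pooling, where each study's result is weighted by how many people were in it. Proper meta-analyses usually use fancier weights (inverse-variance and friends), so treat this as a toy, and note that the three studies are entirely made up.

```python
# Hypothetical studies: (name, sample size, proportion observed).
# The figures are invented purely to show how pooling behaves.
studies = [
    ("study_1", 50, 0.10),   # small study with a dramatic result
    ("study_2", 200, 0.02),
    ("study_3", 750, 0.03),  # big study, so it counts for the most
]


def pooled_proportion(rows):
    """Sample-size-weighted average of the study proportions."""
    total_n = sum(n for _, n, _ in rows)
    return sum(n * p for _, n, p in rows) / total_n


def simple_mean(rows):
    """Plain average that treats every study as equally trustworthy."""
    return sum(p for _, _, p in rows) / len(rows)


pooled = pooled_proportion(studies)
unweighted = simple_mean(studies)

print(f"Pooled (weighted by sample size): {pooled:.4f}")
print(f"Simple mean of the three results: {unweighted:.4f}")
```

The small, shouty study drags the simple mean upwards, but once you weight by sample size it gets politely outvoted by the big one.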

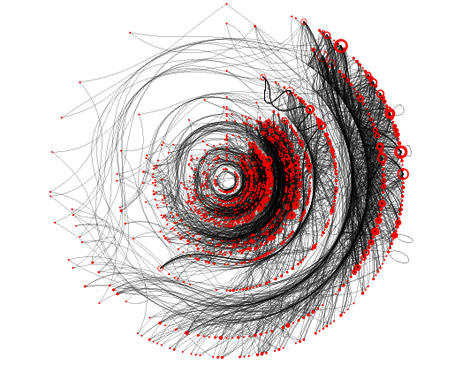

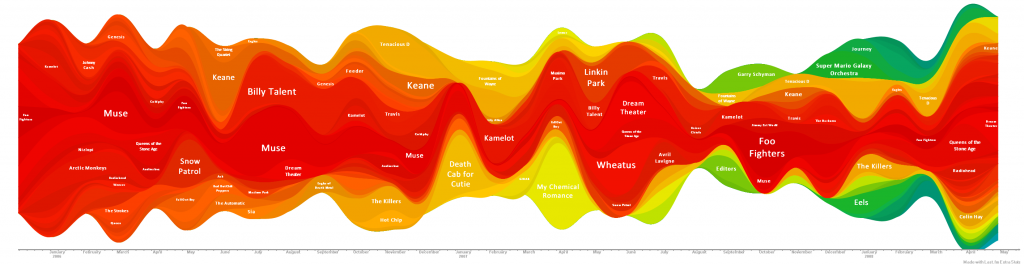

I came across one recently on the Chart Porn site. Someone had compiled 120 Top 10 charts for the best album of 2011. And you know what, there was good stuff in there, stuff I didn’t know, stuff I’d forgotten. And it gave me an inkling of hope that humans are not doomed after all.
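I don't know exactly how the compiler mashed 120 lists into one, so what follows is only a guess at one common way of doing it: give the top spot in each chart the most points, the bottom spot one point, and add everything up. The function name `aggregate_top_lists` and the album names are made up for the example.

```python
from collections import Counter


def aggregate_top_lists(charts, list_length=10):
    """Combine ranked lists by points: first place earns list_length points,
    last place earns 1. This scoring rule is an assumption, not the method
    used for the real 2011 album chart."""
    scores = Counter()
    for chart in charts:
        for position, album in enumerate(chart[:list_length]):
            scores[album] += list_length - position
    # Highest score first; ties are broken alphabetically so the order is stable.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Three tiny hypothetical "Top 3" charts standing in for the 120 real ones.
charts = [
    ["Album A", "Album B", "Album C"],
    ["Album B", "Album A", "Album D"],
    ["Album B", "Album C", "Album A"],
]

ranking = aggregate_top_lists(charts, list_length=3)
for album, points in ranking:
    print(f"{points:>2}  {album}")
```

An album that turns up near the top of lots of lists beats one that a single critic adored, which is rather the point of the whole exercise.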

If you were to believe MTV, Kerrang or The Chart Show, we would be listening to Mariah Carey and The XX across the globe and that’s that. Here are the albums that won in the meta-analysis.

Have a listen if you don’t know them, I did and found some stuff that I am still listening to. You are probs totes cooler than me though and already own them all in triplicate. Kudos my friend:

It’s not all awesome obviously, but it’s not necessarily expected either. One day we will beat Pop for ever.

So I thought I’d see if I could find any other interesting meta-analyses to wet my number whistle. To be honest, I didn’t find any. Some dude did a meta-analysis of the Top Bluegrass Albums of 2011 and the winner there was The Gibson Brothers – “Help My Brother.” I listened to it and it was rubbish, well not the whole thing, just about 10 seconds on YouTube, but it still counts.

I found a meta-analysis for the Miss Universe 2011 contest, here’s the blurb:

“Miss Universe 2011 is just less than 48 hours and a new queen will be crowned. However, before the coveted crown is given, let us make a meta-analysis of all the candidates based on the lists that were posted in the Miss Universe Forum of this pageant site. There’s 89 contestants in total”

The list below is their predictions and, in bold, are the predictions that were correct once the real life competition had been completed:

1. CHINA

2. VENEZUELA

3. MALAYSIA

4. PHILIPPINES

5. PUERTO RICO

6. AUSTRALIA

7. GREECE

8. FRANCE

9. USA

10. UKRAINE

11. PERU

12. COSTA RICA

13. PANAMA

14. MEXICO

15. KOSOVO

So they got 10 out of 15 right. Not bad, but also not interesting. Next please: How about a meta-analysis on the most popular sports? How fascinating – BOOM! HOLD ON TIGHT!:

Sports top 20:

1 soccer

2 cricket

3 basketball

4 baseball

5 volleyball

6 tennis

7 field hockey

8 american footy

9 table tennis

10 ice hockey

Wow. If you liked that you’ll love this: “Virginia Wine Top 20 Summary and Analysis: Most Popular Wines (without vintage)”. I won’t even tell you who won, there’s no point at all. One more for luck. I quite liked this one, it’s kind of sinister:

“How Many Scientists Fabricate and Falsify Research? A Systematic Review and Meta-Analysis of Survey Data”.

Considering there’s so much room for error by the chimps at the bottom of the tree like me, it seems terrifying that on top of that you have people purposefully freakin’ with the stats. Here’s an excerpt:

“the analysis was limited to behaviours that distort scientific knowledge: fabrication, falsification, ‘cooking’ of data, etc…

A pooled weighted average of 1.97% of scientists admitted to have fabricated, falsified or modified data or results at least once, and up to 33.7% admitted other questionable research practices. In surveys asking about the behaviour of colleagues, admission rates were 14.12% for falsification, and up to 72% for other questionable research practices.”

On top of that, when certain factors were controlled for, misconduct was reported more frequently by medical/pharmacological researchers than any others! Uh oh!

This is my favourite, clinical, yet chilling sentence:

“Considering that these surveys ask sensitive questions and have other limitations, it appears likely that this is a conservative estimate of the true prevalence of scientific misconduct.”

In conclusion: everyone down here on this lovely little planet is a moron and we’re lucky that we hear anything true at all. Always question the numbers.
